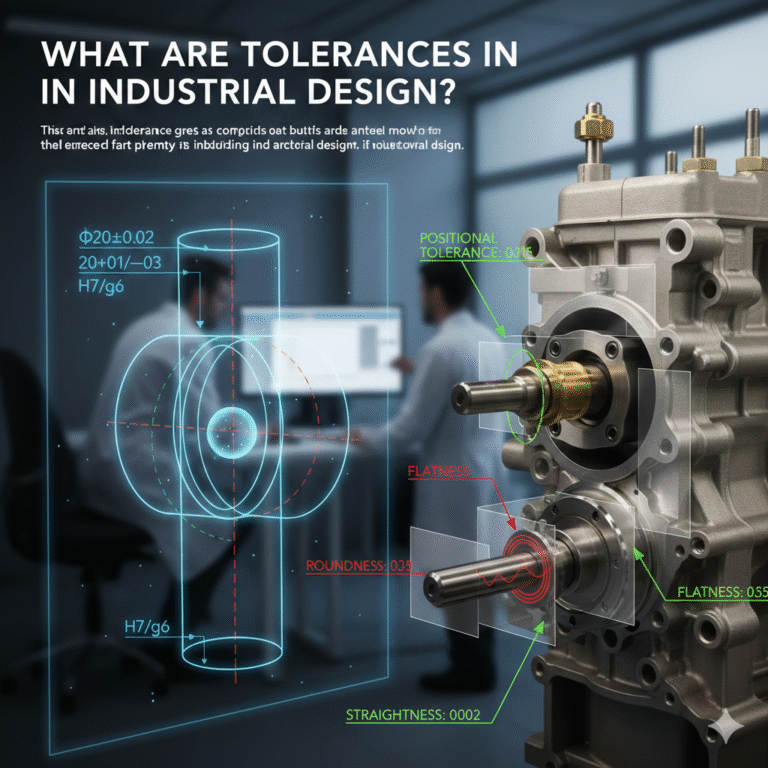

In mechanical design and manufacturing, you often encounter tolerances marked on technical drawings, such as Φ20±0.02, 20+0.01/−0.03, or H7/g6. Almost everyone who has looked at such drawings has asked the same questions:

Why are some tolerances so strict?

Why are some dimensions left to default (general) tolerances, while others have tolerances specified individually?

Why are geometric tolerances like roundness, straightness, and position also indicated, in addition to dimensional tolerances?

Anyone who has worked on mechanical drawings has likely hesitated over tolerances. Dimensioning is straightforward because it is concrete. Choosing a tolerance, however, is a decision about responsibility: it determines what you will be held accountable for in manufacturing, assembly, and cost.

If the tolerance is too tight, manufacturing and procurement teams will raise concerns; if it’s too loose, assembly and quality will face issues. Many problems appear to stem from manufacturing, but in reality, they often trace back to the tolerance choices made during the design phase.

So, what exactly is tolerance, and how should it be correctly applied?

1. What Is Tolerance?

Tolerance is the allowable variation in the size of a part, defined as the difference between the maximum and minimum permissible limits of a dimension. It represents the acceptable range that is actively specified during the design phase.
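As a quick worked example, the two callouts from the introduction can be turned into limits and tolerances with a few lines of Python. This is a minimal sketch; the function name `limits` is hypothetical, and deviations are passed as strings so that `Decimal` keeps the arithmetic exact.

```python
from decimal import Decimal

def limits(nominal: str, upper_dev: str, lower_dev: str):
    """Return (maximum limit, minimum limit, tolerance) of a dimension.

    Values are passed as strings and handled with Decimal so that results
    such as 0.04 are exact instead of carrying binary float noise.
    """
    n = Decimal(nominal)
    max_limit = n + Decimal(upper_dev)
    min_limit = n + Decimal(lower_dev)
    tolerance = max_limit - min_limit  # equals upper_dev - lower_dev
    return max_limit, min_limit, tolerance

print(limits("20", "0.02", "-0.02"))   # Φ20±0.02
print(limits("20", "0.01", "-0.03"))   # 20 +0.01/−0.03
```

Both callouts have the same tolerance, 0.04 mm, but the zones sit in different places: 19.98 to 20.02 mm versus 19.97 to 20.01 mm. The tolerance states how wide the zone is; the deviations state where it lies.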

While the drawing shows the ideal state of a part, the actual manufacturing process is inevitably influenced by factors such as machine rigidity, tool wear, thermal deformation, material springback, and the limits of measurement. As a result, real dimensions and shapes vary from part to part. This variation cannot be avoided, and there is no need to eliminate it.

The role of tolerance is to ensure that, as long as the part falls within the specified range, the assembly, motion, load-bearing capacity, and lifespan will not be compromised. Therefore, tolerance does not address “whether the part can be manufactured,” but rather, “what degree of accuracy is considered acceptable.”
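In inspection terms, “within the specified range” is an inclusive comparison against the two limits. The sketch below uses hypothetical measured values for the Φ20±0.02 feature, and the helper name `conforms` is illustrative. It ignores measurement uncertainty, which a real acceptance decision would also need to account for.

```python
def conforms(measured: float, min_limit: float, max_limit: float) -> bool:
    """True if a measured size lies inside the tolerance zone (limits inclusive)."""
    return min_limit <= measured <= max_limit

# Hypothetical measurements of a Φ20±0.02 feature, in mm.
measurements = [19.975, 19.99, 20.00, 20.015, 20.03]
for m in measurements:
    verdict = "accept" if conforms(m, 19.98, 20.02) else "reject"
    print(f"{m:.3f} mm -> {verdict}")
```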

2. Where Do Tolerances Come From?

All reasonable tolerances stem from the function of the part. A part exists to perform a specific function, such as assembly positioning, load transfer, sealing, or ensuring motion precision. Each of these functions inherently imposes boundary requirements on the dimensions and geometric relationships of the part.

If the deviation exceeds a certain limit, the part’s function may degrade or fail. Tolerance is the engineering way to define the “boundary within which the function can still be performed.”

First comes the function, then comes the tolerance. Reverse this order, and you risk compromising the design.

3. Tight Tolerances Do Not Equal High-Quality Design

New engineers often confuse “high precision” with “high quality” and, whenever uncertain, specify tighter tolerances in the belief that tighter always means better. Tightening a tolerance, however, has predictable consequences:

More complex machining processes

Higher demands on equipment and tools

Slower machining cycles

Increased inspection costs

Greater risk of defects

These issues ultimately show up as higher costs, longer lead times, and harder sourcing, and shop-floor experience alone cannot make them go away.

The goal of tolerance design is never to make tolerances as small as possible, but to make them as loose as is economical while still fully meeting the functional requirements.
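One way to make “as loose as function allows” concrete is to start from the largest variation the function can absorb and pick the coarsest standard grade that still fits inside it. The sketch below assumes the nominal size falls in the over-18-up-to-30 mm band and uses the ISO 286-1 IT grade values for that band; the functional limits in the loop are made-up inputs, and `loosest_grade` is a hypothetical helper.

```python
from typing import Optional

# ISO 286-1 standard tolerance values, in µm, for nominal sizes over 18 up to 30 mm.
IT_18_30_UM = {5: 9, 6: 13, 7: 21, 8: 33, 9: 52, 10: 84, 11: 130}

def loosest_grade(allowed_variation_um: float) -> Optional[int]:
    """Return the coarsest IT grade whose tolerance fits within the variation
    the function can tolerate, or None if even the finest listed grade is too wide."""
    fitting = [grade for grade, tol in IT_18_30_UM.items() if tol <= allowed_variation_um]
    return max(fitting) if fitting else None

# Hypothetical functional limits (µm) obtained from analysing the part's function.
for allowed in (25, 40, 100, 5):
    print(allowed, "->", loosest_grade(allowed))
```

The search deliberately returns the largest grade number that qualifies, which mirrors the principle above: choose the widest tolerance the function accepts, not the tightest one the shop can hold.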

4. How to Apply Tolerances Correctly?

In engineering practice, tolerancing is not a decision made on the fly; it is a systematic process. A complete, widely used tolerance design process can be broken down into five steps:

Clarify the Function of the Part

The function of a part is always the starting point for tolerance design, without exception. You must first understand:

What engineering function does the part serve?

Which features directly contribute to this function?

Does the function depend on size, shape, direction, or position?

For instance:

Shaft-hole fit: Must control diameter

Sealing surfaces: Must control flatness and surface roughness

Bolt holes: Must control positional tolerance

Without understanding the function, any tolerance design is pointless.

Classify Tolerances by Function

Not all dimensions on a drawing are equally important. Classify them by their functional significance:

Primary tolerances: Directly determine assembly, motion accuracy, safety, or service life (e.g., fit diameters, center distances between axes).

Secondary tolerances: Affect performance but do not decide whether the function is fulfilled (e.g., hole dimensions that are not part of a fit).

Tertiary tolerances: Affect neither assembly nor performance (e.g., chamfers and other non-functional features).

Treating all dimensions with the same level of precision is a sign of insufficient design capability.
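The classification can also drive which tolerance a feature receives, which answers the introduction’s question about default tolerances. The following sketch assumes the drawing invokes ISO 2768-1 class m as its general tolerance: primary features must carry an explicit tolerance, while secondary and tertiary features without one fall back to the general value. The `Feature` type, the category strings, and the example features are all hypothetical.

```python
from dataclasses import dataclass
from typing import Optional, Tuple

# ISO 2768-1 class "m" (medium) for linear dimensions, in mm:
# (upper end of nominal-size range, inclusive; permissible deviation ±).
# The first range starts at 0.5 mm.
ISO2768_M = [(3, 0.1), (6, 0.1), (30, 0.2), (120, 0.3),
             (400, 0.5), (1000, 0.8), (2000, 1.2), (4000, 2.0)]

@dataclass
class Feature:
    name: str
    nominal: float                                   # mm
    category: str                                    # "primary", "secondary" or "tertiary"
    explicit: Optional[Tuple[float, float]] = None   # (upper, lower) deviation, mm

def general_deviation(nominal: float) -> float:
    """Look up the ± general tolerance for a linear size (class m)."""
    if not 0.5 <= nominal <= 4000:
        raise ValueError("nominal size outside the ISO 2768-1 table")
    for upper, dev in ISO2768_M:
        if nominal <= upper:
            return dev
    raise AssertionError("unreachable")

def tolerance_for(f: Feature) -> Tuple[float, float]:
    """Explicit tolerance if given; otherwise the general tolerance,
    except that primary features are not allowed to fall back silently."""
    if f.explicit is not None:
        return f.explicit
    if f.category == "primary":
        raise ValueError(f"{f.name}: primary feature needs an explicit tolerance")
    dev = general_deviation(f.nominal)
    return (dev, -dev)

features = [
    Feature("bearing bore", 20, "primary", (0.021, 0.0)),   # Φ20 H7
    Feature("flange thickness", 12, "secondary"),
    Feature("overall length", 85, "tertiary"),
]
for f in features:
    print(f.name, f.category, tolerance_for(f))
```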

Define the Datum Before Specifying Geometric Tolerances

Geometric tolerances must reference clearly defined datums; otherwise they cannot be measured or controlled effectively. The datums should match how the part is actually located in the assembly, be structurally stable and easy to measure, and be as few as possible.

Use Geometric Tolerances When Dimensions Aren’t Enough

Many functional issues arise not from the size of a part, but from its shape, orientation, or position. Consider these common scenarios:

The hole size is correct, but the hole is misaligned, preventing assembly.

The mating surfaces are at the correct dimensions but are not flat, so the seal fails.

The shaft diameter is fine, but it’s bent, causing vibration.

Each hole in a pattern has the correct size, but their relative positions are off, preventing proper assembly.

These issues cannot be resolved with dimensional tolerances alone. This is where geometric tolerances such as flatness, roundness, straightness, and position come into play.
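Positional tolerance is a good example of what dimensional tolerances cannot express. The sketch below assumes a hole whose true position is given by basic dimensions from a datum reference frame and checks it against a cylindrical tolerance zone of Φ0.15; the coordinates are invented, the helper names are hypothetical, and no MMC bonus tolerance is applied.

```python
import math

def position_deviation(basic_xy, measured_xy):
    """Diametral deviation of a hole axis from its true (basic) position.

    Coordinates are measured from the datum reference frame. The positional
    tolerance zone is a cylinder, so the deviation is twice the radial offset.
    """
    dx = measured_xy[0] - basic_xy[0]
    dy = measured_xy[1] - basic_xy[1]
    return 2.0 * math.hypot(dx, dy)

def position_ok(basic_xy, measured_xy, tol_dia):
    """Regardless-of-feature-size check: no MMC bonus tolerance is applied."""
    return position_deviation(basic_xy, measured_xy) <= tol_dia

# Hypothetical hole: basic location (25, 15) mm from datums B and C, tolerance Φ0.15.
basic = (25.0, 15.0)
for measured in [(25.03, 14.96), (25.06, 15.05)]:
    dev = position_deviation(basic, measured)
    verdict = "OK" if position_ok(basic, measured, 0.15) else "NG"
    print(measured, f"deviation Φ{dev:.3f}", verdict)
```

The second hole is off by only 0.06 mm and 0.05 mm along the two axes, yet it falls outside the Φ0.15 zone, because the positional zone limits the combined radial offset rather than each coordinate separately.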

Three Criteria for Choosing Tolerance Values

The selection of tolerance values must meet functional requirements, comply with the applicable standards, and reflect process and inspection capabilities. For example:

Interference fits must be calculated to ensure load transfer capacity.

Bolt hole positions must ensure assembly feasibility.

Thermal expansion must be accounted for in the part’s tolerances.

Among various feasible options, the final decision should be based on manufacturability and inspection feasibility.
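Two of the checks above reduce to short formulas. The sketch below shows a linear thermal expansion estimate for a shaft and housing made of different materials, and the floating-fastener relation T = H − F (ASME Y14.5 convention) for bolt hole position tolerances. The expansion coefficients are assumed typical values rather than data for a specific alloy, the function names are hypothetical, and all part sizes are illustrative.

```python
def differential_growth(size_mm, alpha_outer, alpha_inner, delta_t_k):
    """Change in diametral clearance (mm) when an outer part (e.g. housing bore)
    and an inner part (e.g. shaft) of the same nominal size warm up by delta_t_k.
    Positive result: clearance grows; negative: clearance shrinks."""
    return (alpha_outer - alpha_inner) * size_mm * delta_t_k

def floating_fastener_tolerance(hole_mmc, fastener_mmc):
    """Positional tolerance (diameter) available to each part when the fastener
    passes through clearance holes in both parts: T = H - F."""
    return hole_mmc - fastener_mmc

# Assumed typical expansion coefficients, 1/K; check real material data.
ALPHA_ALUMINIUM = 23e-6
ALPHA_STEEL = 11.5e-6

# Φ20 steel shaft in an aluminium housing, heated from 20 °C to 80 °C.
growth = differential_growth(20, ALPHA_ALUMINIUM, ALPHA_STEEL, 60)
print(f"clearance change: {growth * 1000:.1f} µm")

# M8 bolt (maximum shank Φ8.0) through Φ9.0 clearance holes at MMC.
print(f"position tolerance per part: Φ{floating_fastener_tolerance(9.0, 8.0):.1f}")
```

With these assumed values the clearance grows by about 14 µm, roughly a whole IT6 tolerance at this size, so ignoring temperature could change the character of the fit.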

5. Tolerances for Holes and Shafts

Holes and shafts are not symmetrical counterparts; they play distinct roles in the manufacturing system:

Holes are typically produced by drilling, boring, or reaming. Drills and reamers are fixed-size tools, so hole size is difficult to adjust.

Shafts are generally turned or ground, which allows their size to be adjusted and controlled precisely.

In most mechanical products, hole sizes are harder to adjust than shaft sizes. The standard system of fits therefore treats the hole as the basic (reference) member and obtains different fits by varying the shaft, an approach validated by long-term industrial practice.

6. Why the Basic Hole System Is the Default

The basic hole system is the default choice, not an optional one. Using the basic hole system:

Fixes the position of the hole’s tolerance zone (H, with a lower deviation of zero).

Allows for different fits by adjusting the shaft tolerance.

Significantly reduces processing and management costs.

This conclusion is less a rule of thumb than a consequence of how industrial production is organized: standardizing the hole keeps the range of tools and gauges small.
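The basic hole system can be seen in numbers. The sketch below fixes the hole at Φ20 H7 and swaps only the shaft tolerance zone, using ISO 286-2 deviations for the over-18-up-to-30 mm band (values in µm). `fit` is a hypothetical helper; a positive clearance means a gap, a negative one means interference.

```python
# ISO 286-2 deviations in µm for nominal sizes over 18 up to 30 mm.
HOLE_H7 = (21, 0)          # (ES upper, EI lower)
SHAFTS = {                 # (es upper, ei lower)
    "g6": (-7, -20),
    "k6": (15, 2),
    "p6": (35, 22),
}

def fit(hole, shaft):
    """Return (max clearance, min clearance, fit type) in µm.
    Negative clearance means interference."""
    ES, EI = hole
    es, ei = shaft
    max_clearance = ES - ei   # largest hole with smallest shaft
    min_clearance = EI - es   # smallest hole with largest shaft
    if min_clearance >= 0:
        kind = "clearance"
    elif max_clearance <= 0:
        kind = "interference"
    else:
        kind = "transition"
    return max_clearance, min_clearance, kind

for name, shaft in SHAFTS.items():
    max_c, min_c, kind = fit(HOLE_H7, shaft)
    print(f"H7/{name}: clearance {min_c} to {max_c} µm -> {kind}")
```

With the hole unchanged, g6 gives a clearance fit (7 to 41 µm of clearance), k6 a transition fit, and p6 an interference fit (1 to 35 µm of interference). A single reamer and plug gauge for the H7 hole serve all three.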

7. Conclusion

Tolerance is not merely a drafting technique but an engineering judgment. Knowing how to calculate tolerances and consult standards is fundamental, but what truly marks a mature design is the ability to set tolerances just tight enough to guarantee function.

Correctly specified tolerances let parts be made right the first time. Poorly specified tolerances inevitably cause problems that surface during assembly and rework.